
Machine learning techniques combined with sensor technology

By Ajay Kapur, George Tzanetakis, and Peter F. Driessen

University of Victoria's Music Intelligence and Sound Technology Interdisciplinary Center

Project Description and Goals

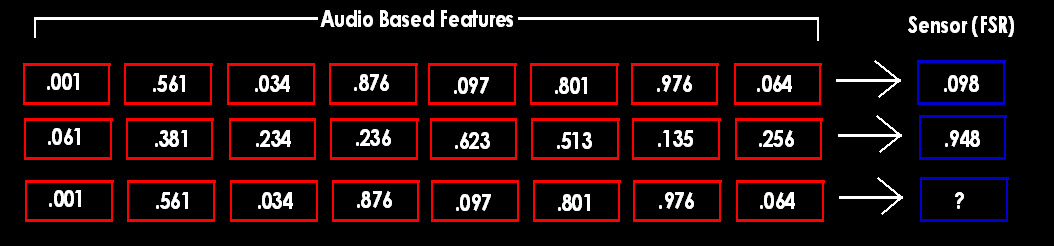

Using hyper instruments that rely on sensors to extract gestural information for controlling the parameters of digital audio effects is common practice. However, there are many pitfalls in creating sensor-based controller systems. Microcontrollers and certain sensors can be expensive to purchase. The massive tangle of wires interconnecting one unit to the next can be failure-prone. Simple analog circuitry can break down, or sensors can wear out right before a performance, forcing musicians to carry a soldering iron along with their tuning fork. The biggest problem with hyper instruments is that there is usually only one version, and the builder is the only one who can benefit from the data acquired. These problems have motivated our team to use sensor data in a way that makes the sensors themselves obsolete. More specifically, we use sensor data to train machine learning models, evaluate their performance, and then use the trained acoustic-based models to replace the sensor.
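
The train, evaluate, and replace workflow described above can be illustrated with a minimal Python sketch. It is not the project's actual pipeline: the data is synthetic, the single audio feature and the linear model are assumptions chosen for brevity, and names such as `make_synthetic_session` and `virtual_sensor` are hypothetical.

```python
import random


def fit_line(xs, ys):
    """Ordinary least squares for y = a*x + b (one feature, one target)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = sxy / sxx
    b = mean_y - a * mean_x
    return a, b


def rmse(model, xs, ys):
    """Root-mean-square error of the fitted line on (xs, ys)."""
    a, b = model
    squared = sum((a * x + b - y) ** 2 for x, y in zip(xs, ys))
    return (squared / len(xs)) ** 0.5


def make_synthetic_session(n, seed):
    """Hypothetical recording: an audio feature paired with a sensor reading.

    Assumption: the sensor value depends linearly on the audio feature,
    plus a little measurement noise. Real data would come from the
    instrument's microphone and its sensors recorded at the same time.
    """
    rng = random.Random(seed)
    audio = [rng.uniform(0.0, 1.0) for _ in range(n)]
    sensor = [3.0 * x + 0.5 + rng.gauss(0.0, 0.05) for x in audio]
    return audio, sensor


# 1. Train: the sensor readings act as ground-truth labels for the audio.
train_audio, train_sensor = make_synthetic_session(200, seed=0)
model = fit_line(train_audio, train_sensor)

# 2. Evaluate: measure the error on a separate, held-out session.
test_audio, test_sensor = make_synthetic_session(50, seed=1)
test_error = rmse(model, test_audio, test_sensor)
print(f"held-out RMSE: {test_error:.3f}")


# 3. Replace: estimate the sensor value from audio alone.
def virtual_sensor(audio_feature):
    a, b = model
    return a * audio_feature + b
```

Once the held-out error is acceptable, `virtual_sensor` stands in for the physical sensor, so another player could obtain the same control data with only a microphone. In practice the model and the audio features would likely be richer than a single line fit.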

More Information

For more information, experimental results, and to cite this work: The Electronic Sitar

Questions? Email: akapur@alumni.princeton.edu

MISTIC